Code Review Automation with Language Models#

Introduction#

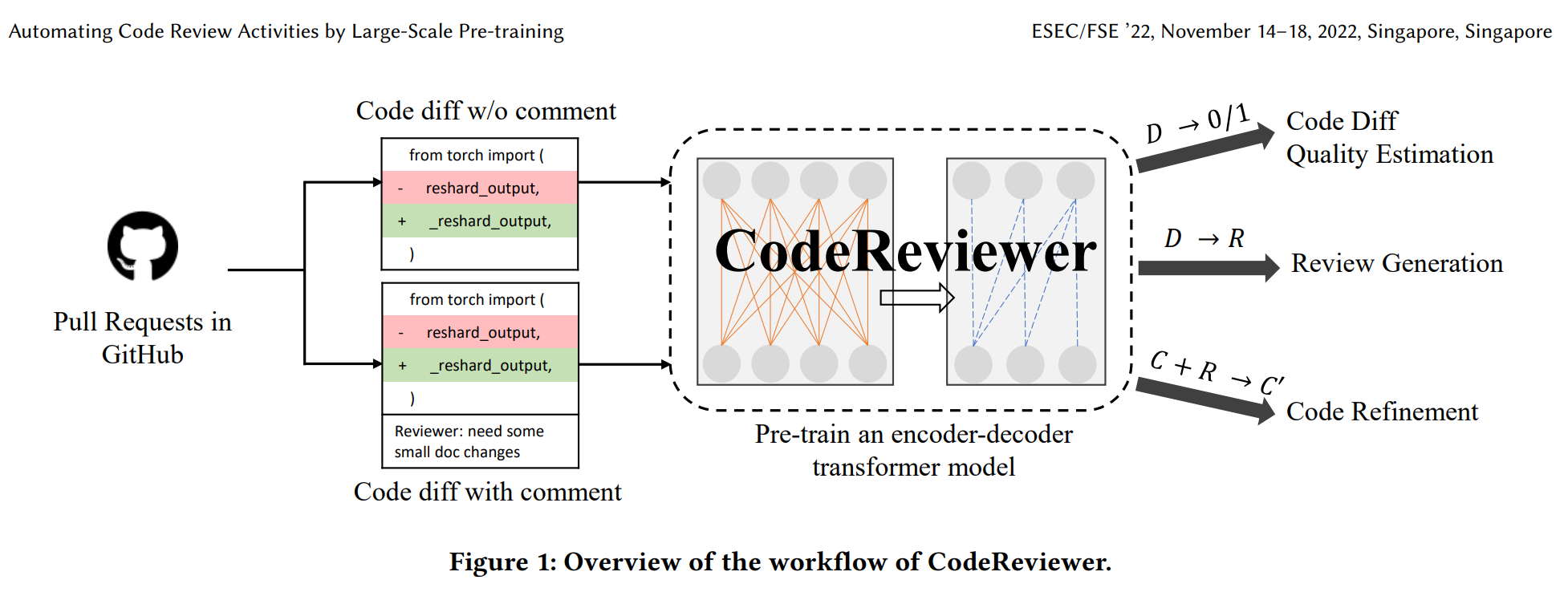

This series of Jupyter notebooks collects code review data from GitHub repositories and uses language models to predict code reviews. Our primary goal is to explore how well the CodeReviewer model generates code reviews and to evaluate its performance.

Collecting Code Review Data#

The first notebook covers the process of collecting code review data from GitHub repositories. We use the PyGithub library to interact with the GitHub API.

Three prominent repositories, namely microsoft/vscode, JetBrains/kotlin, and transloadit/uppy, are selected for data collection due to their popularity and rich code review history. Additionally, we are going to use data from the original CodeReviewer dataset msg-test that is provided by the authors of [LLG+22].
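As a rough illustration of the collection step, the sketch below pulls review comments from one repository with PyGithub and keeps the fields a review model would need. The record layout, the helper names `comment_to_record` and `iter_review_comments`, and the `limit` parameter are assumptions made for this example, not the notebook's actual code. It also assumes a PyGithub version that provides `github.Auth`.

```python
from typing import Any, Dict, Iterator

# Repositories named in the text above.
REPOSITORIES = ["microsoft/vscode", "JetBrains/kotlin", "transloadit/uppy"]


def comment_to_record(repo_name: str, comment: Any) -> Dict[str, str]:
    """Flatten one pull request review comment into a plain dict.

    The field names of the returned dict are hypothetical; `path`,
    `diff_hunk`, and `body` are attributes of PyGithub's PullRequestComment.
    """
    return {
        "repo": repo_name,
        "path": comment.path,
        "diff_hunk": comment.diff_hunk,
        "human_review": comment.body,
    }


def iter_review_comments(
    repo_name: str, token: str, limit: int = 100
) -> Iterator[Dict[str, str]]:
    """Yield up to `limit` review comments from a repository (needs network)."""
    # Imported lazily so the pure helper above works without PyGithub installed.
    from github import Auth, Github

    client = Github(auth=Auth.Token(token))
    repo = client.get_repo(repo_name)
    for index, comment in enumerate(repo.get_pulls_comments()):
        if index >= limit:
            break
        yield comment_to_record(repo_name, comment)
```

Calling `list(iter_review_comments("transloadit/uppy", token))` with a personal access token would return a small sample. A full crawl would also need to respect the GitHub API rate limits.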

CodeReviewer Model Inference#

The second notebook focuses on generating code reviews using the microsoft/codereviewer model. We delve into the tokenization and dataset preparation process, emphasizing the importance of specialized tokens.
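To make the role of specialized tokens more concrete, here is a minimal sketch of how a diff hunk might be rewritten before tokenization, with each line prefixed by a marker for added, deleted, or unchanged code. The token names `<add>`, `<del>`, and `<keep>` follow the scheme described for CodeReviewer in [LLG+22], but the exact formatting rules and the function name `encode_diff_hunk` are assumptions for illustration. The notebook's preprocessing may differ in details.

```python
def encode_diff_hunk(diff_hunk: str) -> str:
    """Replace unified-diff line prefixes with special marker tokens.

    Assumed mapping: '+' -> <add>, '-' -> <del>, anything else -> <keep>.
    The '@@ ... @@' header and '\\ No newline' notes are dropped.
    """
    parts = []
    for line in diff_hunk.splitlines():
        if line.startswith("@@") or line.startswith("\\"):
            continue  # hunk header or diff metadata, not source code
        if line.startswith("+"):
            parts.append("<add>" + line[1:])
        elif line.startswith("-"):
            parts.append("<del>" + line[1:])
        else:
            # Context lines start with a single space in unified diffs.
            parts.append("<keep>" + line[1:])
    return "".join(parts)


hunk = "@@ -1,2 +1,2 @@\n a = 1\n-b = 2\n+b = 3"
encoded = encode_diff_hunk(hunk)
```

The resulting string would then be passed to the model's tokenizer. This only works as intended if the tokenizer's vocabulary contains the marker tokens as single entries, which is why the special tokens matter.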

We explore the model inference process, employing both a HuggingFace pre-trained checkpoint and a fine-tuned CodeReviewer model. The fine-tuning process details are outlined, showcasing parameters and resources used. Model predictions are saved.

Predictions Evaluation#

In the third notebook, we assess the quality of the code review predictions generated by the models. Both the HuggingFace pre-trained model and the fine-tuned model are evaluated on each dataset to compare their performance.

Qualitative assessment is conducted to gain insights into how the models generate code reviews. We present samples of code, along with predictions from both models, enabling a visual comparison with human reviews.

Lastly, we quantitatively evaluate the models’ performance using BLEU-4 scores. We calculate scores for each dataset, providing a comprehensive overview of how well the models align with human reviews.
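For intuition about the metric, the following is a simplified sentence-level BLEU-4 sketch: the geometric mean of 1- to 4-gram precisions multiplied by a brevity penalty. Whitespace tokenization and add-one smoothing on every n-gram order are assumptions chosen to keep the example short. The scoring script used in the notebook may tokenize and smooth differently, so absolute values are not comparable.

```python
import math
from collections import Counter
from typing import List


def ngram_counts(tokens: List[str], n: int) -> Counter:
    """Count all contiguous n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu4(reference: str, hypothesis: str) -> float:
    """Simplified smoothed BLEU-4 for one reference/hypothesis pair."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not hyp:
        return 0.0

    log_precision_sum = 0.0
    for n in range(1, 5):
        hyp_counts = ngram_counts(hyp, n)
        ref_counts = ngram_counts(ref, n)
        # Clipped matches: an n-gram is credited at most as often as it
        # occurs in the reference.
        overlap = sum(min(count, ref_counts[gram]) for gram, count in hyp_counts.items())
        total = sum(hyp_counts.values())
        # Add-one smoothing avoids log(0) for short or non-matching outputs.
        log_precision_sum += math.log((overlap + 1) / (total + 1)) / 4

    # Brevity penalty: penalize hypotheses shorter than the reference.
    if len(hyp) >= len(ref):
        brevity_penalty = 1.0
    else:
        brevity_penalty = math.exp(1 - len(ref) / len(hyp))
    return brevity_penalty * math.exp(log_precision_sum)


human = "please rename this variable"
exact = bleu4(human, "please rename this variable")
partial = bleu4(human, "please rename this")
unrelated = bleu4(human, "unrelated text")
```

A dataset-level score could then be obtained by averaging `bleu4` over all pairs of human review and model prediction.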

Acknowledgements#

Many thanks to the authors of CodeReviewer for their scientific contributions. This work is essentially an in-depth exploration of their research, and I am grateful for their efforts.

Bibliography#

- [p4v] CodeBERT CodeReviewer - a Hugging Face Space by p4vv37. URL: https://huggingface.co/spaces/p4vv37/CodeBERT_CodeReviewer (visited on 2023-09-13).

- [LLG+22] Zhiyu Li, Shuai Lu, Daya Guo, Nan Duan, Shailesh Jannu, Grant Jenks, Deep Majumder, Jared Green, Alexey Svyatkovskiy, Shengyu Fu, and others. CodeReviewer: pre-training for automating code review activities. arXiv preprint arXiv:2203.09095, 2022.